Welcome to OnSIP’s guide to VoIP Fundamentals. Consider this your VoIP 101 class, where we break down the jargon and show you the myriad ways a cloud phone system can benefit your business. Throughout this section, we try to stay in overview mode, giving you the basics of VoIP as a service rather than detailing specific features of one provider versus another. We start with the basics of business VoIP and what to keep in mind when considering a switch from traditional desk phones to a cloud-based system. From there we get more detailed about typical VoIP features, technical specs, and where the industry is headed. Finally, we round out this section with a comprehensive glossary that clearly defines some common—and not so common—VoIP terms. We’ve linked to more in-depth reading throughout should you wish to learn more than what we’ve provided in the overview.

Part 1: Introduction to Business VoIP

Business professionals who are just now considering hosted VoIP might ask, "Is it reliable?" or "Is it high quality?" This is typically because they’ve had an experience with a free VoIP service, such as Google Voice or Skype—and you get what you pay for. Free VoIP services are engineered to minimize the provider's costs, which can lead to oversubscribed networks and quality degradation.

Hosted business VoIP offers high-quality, reliable, and affordable service with an abundance of features and capabilities.

Not only are many hosted business VoIP services reliable, but they offer high-definition voice technology and other capabilities, such as deep data integrations and video conferencing. These advancements, combined with the cost efficiencies of utilizing the same physical network that your computers do, drive hosted VoIP adoption.

If you’re just beginning to research hosted phone system solutions, start by considering the following:

- number of employees

- number of phones you anticipate

- number of conference phones you anticipate

- need to port phone number(s) or purchase phone numbers

- current phone usage (inbound/outbound minutes)

- access to broadband Internet

- must-have phone system features: voicemail, auto attendant, ring routing, business hour rules, call detail records, etc.

- must-have collaboration features: conference calling, text chat, etc.

- must-have call center features: automatic call distribution (ACD) queues, real-time dashboard reporting, recording

Want more? See our extensive list of phone system features to consider. This information is a great starting point to expedite the quoting process with hosted VoIP providers.

Why Should My Small Business Switch to VoIP?

Deploying a cloud phone system is one of the best business decisions you can make. Why?

By switching to a cloud phone system, your business will gain many abilities that will help you compete with larger competitors.

You’ve probably heard the old saying, "If it ain't broke, don't fix it." If the technology gets from point A to point B without a problem, why replace it with something new? For instance, why would you replace your landline phone system if it can already make and receive calls without much of a hassle? And it may have crossed your mind whether a phone system is even necessary for your small business. (The short answer: Yes!)

Switching to VoIP is quick and easy. In fact, you can set up your entire phone system in less than an hour with a provider like OnSIP. And managing a VoIP phone system is much easier than dealing with an on-premise PBX!

Read on for some compelling reasons to switch to a hosted phone system even if you think your current solution is "good enough."

Enterprise-Level Calling Features

It used to be that the most advanced calling features were reserved for big enterprises that could afford the required (and expensive) hardware. Now, due to vast improvements in technology, cloud phone systems offer these calling features at a much lower cost, making them readily available to smaller and leaner businesses.

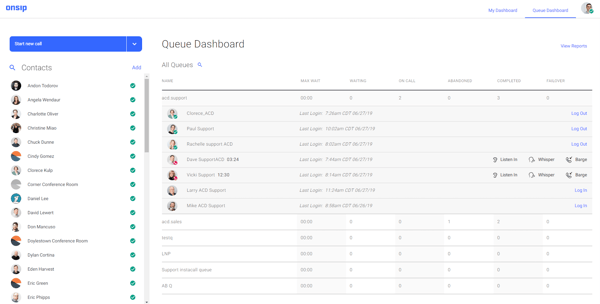

Mobile apps and softphones empower businesspeople to make and receive work calls and video conference when they're away from their desks. Call queues hold callers in line until staff members can speak with them. And while callers are waiting, you can broadcast informative company announcements, like sales promotions, videos, or customer testimonials. And queues also provide dashboards that track and display a wide variety of detailed call stats and call center analytics.

Impressive integrations with platforms like Salesforce and Zendesk enable staff members to work more efficiently and offer improved customer service.

Feature Additions and Removals

Once you've set up a cloud phone system, you can rely on it to grow with your business and provide the features you need when you need them. When a new employee is hired, simply add her right into the system's admin web portal and assign her a phone—you won't need to contact the provider's representatives and wait around for them to complete the tasks.

When shopping for a phone system, consider how your business will grow in the future. A cloud phone system gives your business a polished and professional way to connect with customers, clients, and vendors.

You can also configure new features, such as call queues and call recording, and purchase phone numbers on the fly; once they are set up, they are ready for use. And when you don't need them anymore, simply remove them from your account—the cloud phone system will adapt to your changing business communication needs.

Call Quality

A phone system that's just getting the job done probably isn't smashing any records when it comes to voice quality. But if it isn't total static, then what's the big deal?

HD voice, the leading voice quality standard for VoIP, carries more than twice the audio bandwidth of landline phone service. Wideband technology transmits audio beyond the narrowband limit of 3.4 kHz, all the way up to 7 kHz. This expanded range results in excellent sound and speech quality: clearer phone calls and sharper, crisper voices. The G.722 codec, an industry standard for HD voice, covers more than double the frequency range of a typical landline call.

Hosted phone system providers do not charge you for HD voice capabilities. HD voice is built into most VoIP phones and supported by many VoIP service providers (including OnSIP), so when you switch over, you'll get double the voice quality at no additional cost.

Scalability

If you're a business that plans to grow, the expansion of your phone system is always a concern. With landline services, adding new phones to your system is a potentially costly undertaking that will probably require the installation of new on-site hardware, cabling, and analog phones.

Hosted phone system solutions are built to be scalable in ways that landline phone systems are not. The process of adding a new phone to your business VoIP solution only requires you to connect an IP phone and an Ethernet cord. After plugging the Ethernet cord into both the phone and the wall jack, you have a fully operating phone at your disposal. The phone can then be automatically registered via an online admin portal that's accessible from a web browser.

No phone companies. No IT reps. The entire setup process takes less than 15 minutes, whereas adding a new landline phone could take days or weeks, depending on the phone company's schedule—and never mind the cost involved.

Reliability

Reliability is a difficult thing to "sell" someone on, especially if it requires you to give up your always-accessible physical PBX and trust "the cloud." But just as with scalability, a hosted VoIP provider's reputation is built on their reliability of service. You can take control and vet a provider by:

- asking a provider for references and case studies

- asking the provider about service interruption communications & procedures

- asking the provider for their "trust" page and monitoring it a bit

- asking the provider about their platform architecture and geographic redundancy

In most cases, we find that our customers are pleased with a higher level of reliability than their on-premise PBX, particularly because we utilize redundant routers, servers, Internet connections, and upstream carriers. We’re also proud of our patented SIP geographic scaling system, which works to transparently migrate customers around the OnSIP network and provide a more robust infrastructure overall.

Even in the event of an Internet outage on your network, OnSIP will be available and will route your calls to your failover destinations (e.g., voicemail). Plus, you can log in to the OnSIP admin portal from a device on LTE and reroute your calls to cell phones or an emergency landline. Typically with hosted VoIP, you'll find more options and flexibility to ensure service availability.

Pricing

A phone system may be "good enough" in the sense that it's mostly functional. But that doesn't mean that it's saving you money. In fact, an older phone system installed years ago is not only behind technologically, but it may be costing you more time and money with regard to maintenance.

Some hosted PBX providers, like OnSIP, offer flexible pricing plans based on your phone usage. With a Pay-As-You-Go pricing plan, you are only charged for the features and minutes you use. This means you can avoid both being saddled with features you don't want and paying individual fees per phone. Under this plan, you can connect as many phones as you like without incurring extra cost.

On the other hand, most business VoIP companies also offer all-inclusive packages that come with unlimited minutes and a bevy of features. This is great for businesses with high call volume, such as call centers. The flat rate is convenient because you don’t have to budget for usage fluctuations, and you also pay less for features than you would if you purchased them singly.
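To make the trade-off between the two plan types concrete, here is a minimal sketch that compares a usage-based bill with a flat per-user bill. The function names and every rate in it are hypothetical placeholders for illustration, not actual prices from OnSIP or any other provider; plug in the figures from your own quotes.

```python
def pay_as_you_go_cost(minutes: int, rate_per_minute: float,
                       feature_fees: float = 0.0) -> float:
    """Monthly cost when you pay only for minutes used plus chosen features."""
    return minutes * rate_per_minute + feature_fees


def flat_rate_cost(users: int, price_per_user: float) -> float:
    """Monthly cost of an all-inclusive plan billed per user."""
    return users * price_per_user


def cheaper_plan(minutes: int, rate_per_minute: float, feature_fees: float,
                 users: int, price_per_user: float) -> str:
    """Return the name of the plan with the lower monthly cost."""
    payg = pay_as_you_go_cost(minutes, rate_per_minute, feature_fees)
    flat = flat_rate_cost(users, price_per_user)
    return "pay-as-you-go" if payg <= flat else "flat-rate"


# Hypothetical numbers: a 10-person office, low versus high call volume.
print(cheaper_plan(1000, 0.03, 50.0, users=10, price_per_user=20.0))
print(cheaper_plan(20000, 0.03, 50.0, users=10, price_per_user=20.0))
```

With these made-up rates, the low-volume office comes out ahead on the usage-based plan and the high-volume office on the flat rate, which mirrors the guidance above.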

No matter what kind of sector you're in, there's a significant chance your business will save money by switching to a hosted VoIP phone system.

Geographical Flexibility

According to Global Workplace Analytics, more than 4 million people work away from the office on a daily basis. Perhaps you have a phone system that works just fine when you're at the store or office, but when you run home or take the day off, you miss important calls and messages because you need to be physically present at your work space to access your phone system.

With a hosted phone system, there is no physical location where everything is stored. As long as you have a working Internet connection, you can make and receive calls, access voicemail, take video calls, and change administrative options directly from an online admin portal. Your company extension will still ring through to your line, and it will be as if you never left the office.

Staff Productivity and Team Collaboration

One of the standout features that business VoIP offers is the softphone, a software-based phone available as a mobile app, desktop app, and browser-based app. Softphones equip employees with all of the features and functionalities of a business phone, giving them the means to make and receive work calls from their preferred devices.

Software phones, or softphones, bring the power of an office desk phone to your computer or mobile device.

But more than just business calling, softphones provide employees with productivity-boosting and collaboration-enhancing abilities. For instance, if a support rep doesn't know the answer to a caller's question, he can quickly IM his manager right in the softphone's interface and get the correct answer. Furthermore, one-on-one and team meetings can be held over video call right within the softphone's interface, encouraging team collaboration and cohesion.

Employee Acquisition & Retention

Cloud phone systems are uniquely adept at uniting a distributed workforce, whether spread out over multiple offices or remote working from home. Since the "brains" of the service are securely stored and maintained by the provider, a business and its employees can access and use the service from any place that has an Internet connection: an office, home, or even the local coffee shop.

With that in mind, a business owner can look beyond the local city in which her business is located when hiring new staff and instead focus on acquiring the most talented and best-qualified person for the position. Regardless of where he happens to live, all that the new hire needs to connect to the phone system (and new colleagues) is a computer or laptop, phone, and Internet connection.

Match—and Surpass—Your Competition

A cloud phone system is one of the best business technologies that you can use to challenge bigger competitors. By using communication features that were once only available at the enterprise level, you'll equip your staff members with the means to work smarter, provide better customer service, and impress your clientele with the professionalism of your business.

A cloud phone system offers enterprise-level communication features that empower your business to challenge bigger competitors.

Considerations When Switching to VoIP

Switching phone systems is a daunting task for any business. It’s a hefty undertaking to be sure but not impossible. You should understand the factors that most affect your business so that when you research different VoIP providers, you choose the one that best suits your needs. We created a downloadable checklist that will help ensure that you’ve covered every aspect of switching to VoIP—from research through deployment. We’ve covered the main topics below.

On-Premise PBX Versus Hosted PBX

On-premise PBX means you have all the hardware for your phone system right there in your office. Hosted PBX means a third-party provider handles all of that, and you just have your phones with you. But which one is right for your business?

An on-premise PBX has a higher upfront cost because you need to purchase and maintain your own servers, along with SIP trunking so that you can connect your PBX to the PSTN. However, if you’re a large company with a dedicated IT department, this is a great option. Small to medium-sized businesses that don’t have the manpower and overhead to handle their own maintenance and repairs may prefer hosted PBX so that phone professionals handle any technical issues. All you need to get started with hosted PBX is a business-grade Internet connection.

Internet Bandwidth

Not surprisingly, the key aspect of successfully using a hosted VoIP service is quality Internet connectivity. For multiple phones in an office location, you’ll need business-grade broadband with enough bandwidth. We rarely encounter businesses that don’t have "enough" bandwidth, but a good rule of thumb is that one VoIP call requires 100 kbps each way to be safe, or 200 kbps total. An average business can anticipate one concurrent call per ten employees at any given time. So if you have ten employees, you need 200 kbps dedicated to VoIP traffic at all times. (It can be five to ten times this amount for video calling.)
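The rule above is simple arithmetic, and a short sketch can apply it to any office size. The function name, the rounding up of partial calls, and the `video_multiplier` parameter are our own assumptions for illustration; the 100 kbps figure and the one-call-per-ten-employees ratio come from the rule itself.

```python
import math


def voip_bandwidth_kbps(employees: int,
                        kbps_each_way: int = 100,
                        employees_per_call: int = 10,
                        video_multiplier: int = 1) -> int:
    """Estimate total bandwidth (upload plus download) to reserve for calls."""
    if employees <= 0:
        return 0
    # One concurrent call per ten employees; rounding up is an assumption
    # so that a partial group of employees still gets a full call's worth.
    concurrent_calls = math.ceil(employees / employees_per_call)
    # Each call needs kbps_each_way in both directions.
    return concurrent_calls * kbps_each_way * 2 * video_multiplier


print(voip_bandwidth_kbps(10))                      # 200
print(voip_bandwidth_kbps(25))                      # 600
print(voip_bandwidth_kbps(10, video_multiplier=5))  # 1000
```

Treat the result as a floor for VoIP traffic alone; the rest of your office's Internet usage comes on top of it.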

If you’re supporting phones at home, residential broadband that allows you to stream video (e.g., YouTube) with negligible buffer times and hiccups is likely good enough.

You can verify that you are receiving the bandwidth promised by your Internet provider with an online speed test. Check the upload and download speed against your ISP's Service Level Agreement.

Internet Speed

Internet speed heavily factors into VoIP call quality. If it’s too low, call quality degrades, resulting in lags and breaks or fully dropped connections. A VoIP quality test is a simple way to gauge your Internet speed when preparing to switch to VoIP.

Ethernet speeds and support for power over Ethernet (PoE) can also affect the deployment of your phone system. While these aren’t the highest priority compared to features like HD voice quality, phone design, or number of SIP lines, you’ll still feel the impact of Ethernet speeds and PoE. PoE saves space and saves on setup and maintenance costs. Ethernet speeds will affect your phone service experience, from call quality to reliability.

Internet Uptime

Uptime refers to the amount of time an ISP’s (Internet Service Provider) Internet service is properly running. Downtime describes the opposite—when the service is inactive. Downtime isn’t always a bad thing; oftentimes downtime is planned for maintenance, and the ISP informs its customers in advance. But reliable uptime is crucial for VoIP consumers.

There are four levels of ISP uptime service, and the right one for your business depends on your individual needs, so it’s important to assess those in conjunction with your ISP.
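When you compare uptime commitments, it can help to translate a percentage into the downtime it actually permits. The sketch below does that conversion; the percentages in the loop are illustrative examples only and are not meant to represent the specific service levels any ISP offers.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760 hours, ignoring leap years


def max_downtime_hours_per_year(uptime_percent: float) -> float:
    """Convert an uptime percentage into the hours of downtime it allows per year."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    return HOURS_PER_YEAR * (100 - uptime_percent) / 100


# Illustrative percentages only; check your ISP's actual commitments.
for pct in (99.0, 99.9, 99.99):
    hours = max_downtime_hours_per_year(pct)
    print(f"{pct}% uptime allows up to {hours:.2f} hours of downtime per year")
```

A difference that looks tiny on paper, such as 99 percent versus 99.9 percent, is the difference between several days and several hours without phone service over a year.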

Routers

Offices leveraging hosted VoIP need business-grade routers. A residential router will not cut it, simply based on the amount of traffic it needs to handle. (Some hosted VoIP providers require router configurations only available on business-grade routers as well.)

Call Quality

One of the biggest questions that organizations have is whether VoIP audio quality is as good as PSTN calls. The answer is that under optimal network conditions, VoIP calls are at least as good as analog calls, if not better. In fact, because VoIP calls are routed through enterprise-class networks, most of the factors that affect audio calls can be controlled by your IT department. User control is not possible with traditional PSTN calls.

However, there are a number of factors that can impact a VoIP call’s audio quality, particularly if the underlying data network is not robust. The speed of the Internet connection, the number of concurrent calls, the physical hardware, and the audio codecs used can all affect VoIP call quality. Latency, VoIP jitter, and packet loss are three important network metrics that are good indicators of call quality.
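As a rough illustration of how these three metrics relate to raw measurements, the sketch below summarizes a series of test packets. It assumes you already have one latency reading per packet, with `None` marking a packet that never arrived, and it uses a simplified jitter definition (the average change between consecutive readings) rather than the estimator a real VoIP quality test would use. The function name and the sample data are hypothetical.

```python
from typing import Optional, Sequence


def call_quality_metrics(samples_ms: Sequence[Optional[float]]) -> dict:
    """Summarize latency, jitter, and packet loss from per-packet readings.

    samples_ms holds one latency reading in milliseconds per test packet;
    None means the packet was lost.
    """
    if not samples_ms:
        raise ValueError("need at least one sample")
    received = [s for s in samples_ms if s is not None]
    # Packet loss: share of test packets that never arrived.
    loss_pct = 100 * (len(samples_ms) - len(received)) / len(samples_ms)
    # Latency: average delay of the packets that did arrive.
    latency = sum(received) / len(received) if received else None
    # Simplified jitter: average change between consecutive received packets.
    deltas = [abs(b - a) for a, b in zip(received, received[1:])]
    jitter = sum(deltas) / len(deltas) if deltas else None
    return {"latency_ms": latency, "jitter_ms": jitter,
            "packet_loss_pct": loss_pct}


# Hypothetical readings: five packets sent, one lost.
print(call_quality_metrics([20.0, 22.0, None, 21.0, 25.0]))
```

Lower is better for all three numbers, and a connection with modest latency but high jitter or loss can still produce choppy audio.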

Phones

It’s best to use IP phones for hosted VoIP, meaning desk phones with Ethernet ports or software phones. With some hosted VoIP providers, you can use analog telephone adapters (ATAs) with plain old telephones. However, this limits the phone’s capabilities; for example, it will not support HD voice. If you’re switching to hosted VoIP and you plan to grow your business beyond just a few employees, we recommend that you test a business VoIP service with loaner phones and then purchase the IP phones that work best for you.

IP phones use SIP (Session Initiation Protocol), the de facto standard VoIP protocol. Be wary of any provider that requires you to purchase nonstandard phones or phones that are locked with their software. Purchasing standard SIP phones will give you the freedom to switch providers down the line, should you need to. Your investment will go further.

VoIP also makes it possible to use softphones (software phones) instead of, or in addition to, physical desk phones. The phrase "mobile VoIP" refers to using an app that allows you to make VoIP calls. In other words, you can download a softphone app to receive all of your work calls, and your outbound calls will appear with your professional caller ID.

If you’re considering using mobile VoIP full-time, test the quality before you make your final decision.

Phone Lines

VoIP desk phones connect to your Internet network via Ethernet cables. Softphones and webphones connect to your VoIP phone system via wireless Internet. This allows you to access your business phone system from anywhere with an active Internet connection.

However, you’ll still need to figure out how much capacity your business requires, whether that refers to:

- the number of employees who need a phone

- the number of physical phones you need

- the number of inbound/outbound calls that your organization can handle at one time

- the number of phone numbers your business requires

- the number of simultaneous calls each person can accept

Phone Numbers

Switching to VoIP does not mean you have to lose your business phone number. The main instance where you actually can’t keep your existing number is when you move out of the area code region. Any reputable phone service will show you their available numbers, like we do here at OnSIP.

Changing your number is a hassle for any business: it appears in more places than you might think, beyond obvious ones like advertising materials and your website. To be on the safe side, check phone number availability with a prospective provider before making the switch.

Cost Savings

Business VoIP’s primary advantage over traditional phone service is its vast cost difference. Fewer hardware requirements—none, if you opt for softphones—cut out initial costs. Besides the literal cost, VoIP considerably contributes to improved productivity, efficiency, and flexibility for employers and employees alike.

PCI and HIPAA Compliance

PCI DSS (Payment Card Industry Data Security Standard) compliance is a valuable security credential to look for in a VoIP provider while shopping around. Besides the obvious benefit of knowing your credit card information is safe, a PCI-compliant provider is required to have a secure network and follow strong security practices, including constant monitoring for vulnerabilities.

It's no small chore to establish HIPAA compliance; that's why few hosted VoIP providers have performed the required policy and procedure improvements, documentation, employee training, ongoing monitoring, and physical security audits. Some, however, have taken this step. By being certified to sign the Business Associate Agreements that HIPAA requires, providers assure customers that they take on responsibility for compliance as regards their voice and video platform. In the process, they extend to healthcare the considerable benefits of cloud communications that non-regulated industries have enjoyed for years.

Ease of Setup

VoIP—particularly hosted VoIP with softphones—is incredibly easy to set up. With many providers, OnSIP included, you don’t need to have someone come in to set up a complex system of wires or devote lots of time to onboarding individual users. For a small or medium business setting up their own phones, the whole process should take less than an hour.

Enhanced Business VoIP Features

Businesses that utilize VoIP increasingly rely on advanced functionality to conduct their day-to-day operations. In today's market, a robust hosted PBX platform offers its customers not only a solid foundation of classic features but also a bevy of powerful pioneering capabilities that allow its users to empower and customize their phone system.

Between video calling and groundbreaking voice quality, the top hosted PBX services are pushing the boundaries of how users communicate. Here are some advanced business VoIP features that companies should consider for their evolving needs.

Click to Call

In sales and service, seconds matter. Arm your staff with the ability to make more calls by deploying simple click-to-call plugins in their browsers. Plugins highlight all phone numbers within a browser window and make them clickable. For employees who earn more when they call more, these easy-to-use widgets are a great productivity tool.

Embedded Web-Based Chat

For cost-cutting and talk avoidance purposes, many big businesses have employed automated menus, bots, and self-help DIY articles at the forefront of their customer service. Think about your own experience of calling a business's customer support: You dig for a phone number, navigate an unforgiving attendant menu, and remain stuck on hold. And with the emergence of live chat, how many times have we mumbled to ourselves, "I know I'm responding to a bot here!" Make your business stand out by providing a pleasant calling experience for leads and customers.

Video Conferencing

If you can’t be in the same room as your customer, take full advantage of video conferencing technology. Many cloud-based phone system providers offer video conferencing, which allows you to create better rapport, build tighter relationships, and have more effective meetings. Face-to-face conversations are more personal and efficient than simple audio conference calls.

The number of people who work from home in any capacity has increased 80 percent since 2005 to 30 million. That's why the best hosted PBX platforms are honing their video calling offerings. They want their customers to stay in sync with this increasingly crucial demand.

With technology like WebRTC, the barriers between phone systems and Internet browsers are being erased entirely. Solutions such as the OnSIP app allow users to engage in video calls with any compatible device without requiring them to install any downloads or plugins. This is the future of video calling: instant, easy, and hassle-free.

HD Voice

Today's premier VoIP platforms are giving users voice quality that makes phone calls seem more like in-person conversations. HD voice is a wideband audio technology that uses codecs covering a greater frequency range of the audio spectrum than conventional telephone calls. The PSTN and a majority of VoIP codecs sample audio at 8 kHz, while a wideband VoIP implementation might sample at 16 kHz.

Our own HD voice operates on the G.722 wideband codec. G.722 provides enhanced speech quality because of a wider speech bandwidth (50–7000 Hz) when compared to narrowband codecs like G.711 (optimized for landline use at 300–3400 Hz).
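The frequency figures above can be checked with a few lines of arithmetic. This sketch compares the width of the two speech bands and applies the standard sampling rule that a digital signal can represent frequencies up to half its sample rate. The function names are ours, chosen for illustration.

```python
def passband_width_hz(low_hz: float, high_hz: float) -> float:
    """Width of the speech band a codec carries, in hertz."""
    return high_hz - low_hz


def max_audio_frequency_hz(sample_rate_hz: float) -> float:
    """Highest frequency a given sample rate can represent (half the rate)."""
    return sample_rate_hz / 2


narrowband = passband_width_hz(300, 3400)  # G.711 speech band
wideband = passband_width_hz(50, 7000)     # G.722 speech band

print(f"Narrowband width: {narrowband} Hz")
print(f"Wideband width:   {wideband} Hz")
print(f"Ratio:            {wideband / narrowband:.2f}x")
print(f"8 kHz sampling tops out at  {max_audio_frequency_hz(8000):.0f} Hz")
print(f"16 kHz sampling tops out at {max_audio_frequency_hz(16000):.0f} Hz")
```

The ratio works out to a little over two, which is where the "more than double" description of HD voice comes from, and the 16 kHz sample rate is what leaves room for audio up to 7 kHz.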

Presence

A long-time telephony feature, presence among coworkers is ever more crucial for teams working together in dispersed locations. The traditional implementation of presence is a phone feature called busy lamp field (BLF), which lights up the phone lines or somehow visually indicates on a screen when your coworkers are on the phone.

Today, leading VoIP services take presence a step further by integrating it in unified communications applications. For example, OnSIP’s mobile, desktop, and web apps give users the ability to see whether their team members are on the phone, initiate calls with a mouse click, manage voicemail, and chat. It doesn't matter where you are; an employee in New York can see whether a remote worker in Arizona is busy or on the phone. This keeps a company's phone network streamlined and cohesive.

Simultaneous Logins

Work is not a place we go to; it’s a thing we do. Having a softphone both on the smartphone in your pocket and on your computer, as well as desk phones at your home office and workplace, empowers your customer-facing staff to be in constant contact with your customers no matter where they choose to work. Your cloud-based phone system should allow staff members to have as many devices connected at one time as needed and not limit the number of simultaneous logins.

Third-Party Integrations

The top hosted PBX platforms generally invite third-party integration, either as a part of their general offering or at the behest of the user. Integration allows for other critical business platforms to interoperate with VoIP functionality, which gives users access to faster calls, greater support, and advanced CRM. We integrate our platform with services such as HubSpot and Zendesk to streamline sales and support processes for our users.

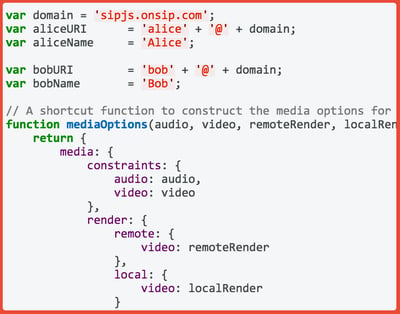

Resources for Developers

The most advanced hosted PBX solutions on the market actually open up their own platforms for interested developers. This allows users to get underneath the hood of their VoIP service and leverage real-time call data with their own platforms or even build complementary communications features to the solutions they offer.

SIP.js, our JavaScript SIP library, was built to give developers full access to the OnSIP platform. With a SIP-centric command structure, the library was tailor-made for real-time communications developers who want to combine the power of SIP with the capabilities of WebRTC. We also offer hands-on SIP.js documentation for support.

Free VoIP Phone Service Resources

A free IP phone system allows you to communicate with your team through voice calls, video calls, and instant messaging (IM) at no cost.

A free phone system has its limitations, including not being able to call regular landline phone numbers. But free IP phone systems ultimately serve as an excellent platform that can connect your team members through high-quality voice, video, and messaging.

Here are some additional perks that free VoIP phone systems can offer for team-based communications.

Webphones & Softphones

Most IP desk phones cost between $50 and $150 per unit. But some VoIP service providers offer webphones and softphones as alternative solutions. These phone apps are usually powerful enough to operate as full replacements for your desk phone.

With the ability to make and receive calls directly in a web browser, webphones harness new technologies to open up more possibilities within standard business communications.

A webphone runs inside your browser window; a softphone is a standalone app on your PC or mobile device. Webphones and softphones are built to mimic desk phone capabilities. They have features such as contact directories, call transfer/hold, dialpad, and other familiar calling functions.

Some providers offer proprietary webphones or softphones for free. Others allow you to use third-party phone apps with their own services. These third-party apps typically have a small licensing fee that costs much less than the average desk phone. It's entirely possible to furnish your team with webphones and/or softphones for free and avoid spending money on desk phones.

In-Network Calls to Colleagues

One of the top features of a free IP phone system is free in-network calling. You can call anybody who's on your phone system without incurring charges. This allows you to communicate with all of your coworkers while never having to worry about racking up costs.

This is especially helpful if your organization has multiple locations. The back-and-forth calling between your offices will never cost anything. Free extension dialing also makes it easier for you to call your coworkers internally without requiring you to dial your main number.

This feature is commonly referred to as free SIP-to-SIP calling, SIP being the protocol that powers many VoIP services. However, you'll pay per-minute costs for calls that are not SIP based, including calls made to landline phones. But you can always use a free phone system exclusively for your office so that your team can communicate internally.

You can also connect to most free IP phone systems wherever you are, whether you're on the go or working remotely. Once you connect to your phone network, you have full access to in-network calling, presence, and other key features, no matter where you are geographically. All you need to access your office phone system is an active Internet connection and a compatible webphone, a softphone, or a desk phone.

Web Calls & Video Chats

Some VoIP phone systems offer voice and video web calling at no cost. This allows you to take VoIP calls from your team, or anyone using a webphone, for free. In other words, in-network calling and a solid portion of out-of-network calling can be free for select VoIP phone systems. And contrary to other free voice apps online, VoIP carriers offer HD quality voice audio for calls.

Free video calling enables one-to-one video chats with team members. Video calls give a visual presence to coworkers who are remote or in another office. Cloud phone systems with video calling save you the hassle of having to use a third-party app like Skype. Video calls on cloud phone systems can also deliver HD-quality streams, encoded with codecs such as H.264, that can rival or surpass services like Google Hangouts.

Instant Messaging

Many cloud phone systems come with unified communications features such as instant messaging. Typically, IM is offered as part of a webphone or softphone app. Some IM services are proprietary, while other cloud phone systems use third-party integrations with apps such as Slack.

Text chats give your team another way to collaborate internally for free. In some situations, instant messaging is preferred to voice and video. For example, when your coworker is busy with other concerns, a quick message is less disruptive than a phone call. An IP phone system with voice, video, and IM is a one-stop platform for all your team's internal communication needs.

Part 2: How to Maximize Your Cloud Phone System

Today's cloud phone systems do much more than just direct calls and light up buttons on a desk phone. Addressing rapidly changing business needs, their service platforms offer voice and video calling, mobile communications, IM/chat, video conferencing, integrations with CRM and help desk software, screen sharing, SMS, and so much more.

Considering the fact that cloud phone systems provide all of these productivity-boosting features, ask yourself an important question: Are you doing work that your phone system can handle?

Keep reading to discover how you can maximize your cloud phone system, slashing your work tasks and boosting your efficiency in the process.

Manage Your Cloud Phone System With an Admin Portal

Cloud phone systems come with online admin portals that provide all the tools you need to be an effective administrator. Rather than having to contact a phone service rep and wait for a callback, you can control any moves, additions, or changes to your system in real time. The cloud phone provider acts as backup support. If you need to add a line for a new employee, update your E911 locations, or make any minor adjustments to improve your telecom experience, you have that power at your fingertips.

Web-based admin portals equip you with an extensive set of tools to successfully administer your business's phones, without waiting for a phone service representative to get around to your requests.

Display Key Caller Data With CRM Integrations

Cloud phone systems offer integrations with an assortment of CRM platforms, including Salesforce, Zendesk, HubSpot, and SugarCRM. These integrations enable the CRM service to use the data in your phone system, and vice versa.

The benefit of using CRM integrations is that they will equip your sales and support reps with useful caller data even before a call is answered. Reps won't have to ask for a caller's name, company name, or account number. When a call comes in, a popup notification will appear on the rep's computer, supplying caller ID information and web links to the caller's record in the CRM service. Armed with this data, the rep can greet the caller and immediately focus on her issue.

Calls can even be automatically logged into the caller's CRM record without the rep having to manually enter any information. The date and time of the call, call length, support ticket number, and other pertinent info will be saved in the caller's record, allowing your reps to quickly type in a few notes and then move on to the next call or case.
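The caller lookup behind a screen pop can be sketched in a few lines. This is only an illustration: the record layout, the `screen_pop` function, and the `crm.example.com` URL are all hypothetical, and a real CRM integration would call the CRM provider's own API.

```python
# Hypothetical CRM records keyed by caller phone number.
CRM_RECORDS = {
    "+12125550100": {"name": "Dana Lee", "company": "Acme Co", "record_id": "0015"},
}

def screen_pop(caller_number, records=CRM_RECORDS,
               base_url="https://crm.example.com/contacts/"):
    """Return what a rep would see before answering, with a fallback for unknown callers."""
    record = records.get(caller_number)
    if record is None:
        return {"caller": caller_number, "known": False}
    return {
        "caller": caller_number,
        "known": True,
        "name": record["name"],
        "company": record["company"],
        "link": base_url + record["record_id"],  # link to the caller's CRM record
    }

popup = screen_pop("+12125550100")
```

Unknown numbers fall through to a minimal popup, so the rep still sees the caller ID even when no record exists.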

Keep Callers on the Line With ACD Queues

An ACD queue is a feature that holds callers in line until the next available agent can answer the call. It is critical to ensuring that callers don't hear busy signals or get sent to a voicemail box when all of your agents are occupied.
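To make the idea concrete, here is a toy model of an ACD queue. It is a sketch under simplifying assumptions (one queue, first-come-first-served, hypothetical class and method names), not how any particular provider implements queuing.

```python
from collections import deque

class AcdQueue:
    """Toy ACD queue: callers wait in arrival order until an agent frees up."""

    def __init__(self, agents):
        self.idle_agents = deque(agents)  # agents ready to take a call
        self.waiting = deque()            # callers on hold, oldest first

    def call_arrives(self, caller):
        """Connect the caller if an agent is idle; otherwise hold the caller in line."""
        if self.idle_agents:
            return (caller, self.idle_agents.popleft())
        self.waiting.append(caller)
        return None

    def agent_finishes(self, agent):
        """Give the freed agent the longest-waiting caller, or mark the agent idle."""
        if self.waiting:
            return (self.waiting.popleft(), agent)
        self.idle_agents.append(agent)
        return None

queue = AcdQueue(["agent_1"])
first = queue.call_arrives("caller_a")      # connected immediately
second = queue.call_arrives("caller_b")     # held in the queue, no busy signal
handoff = queue.agent_finishes("agent_1")   # caller_b reaches agent_1
```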

While callers are waiting on hold, take advantage of that time to present engaging information. In addition to music on hold, cloud phone systems provide other options that keep callers on the line. Use a customized on hold announcement to inform callers of a new product or current sales promotion. These announcements can help to reinforce your brand and keep customers informed when new products are released.

Automatically Email Queue Reports to a Queue Supervisor

ACD queues also record essential queue performance data on call and agent activity (average call wait time, maximum call wait time, average call duration per call agent, etc.). Queue supervisors simply select the dates to review and can then generate reports that display historical queue statistics.
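The statistics named above are simple to derive from raw call records. The sketch below assumes a hypothetical record layout of (agent, seconds waited, seconds on the call); actual queue reports will use whatever fields your provider exposes.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical queue records: (agent, seconds waited in queue, seconds on the call).
calls = [
    ("alice", 30, 240),
    ("bob", 95, 180),
    ("alice", 10, 300),
    ("bob", 45, 120),
]

def queue_report(records):
    """Summarize wait times and per-agent call duration for a list of records."""
    waits = [wait for _, wait, _ in records]
    per_agent = defaultdict(list)
    for agent, _, talk in records:
        per_agent[agent].append(talk)
    return {
        "average_wait": mean(waits),
        "max_wait": max(waits),
        "average_duration_by_agent": {a: mean(t) for a, t in per_agent.items()},
    }

report = queue_report(calls)
```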

But instead of having the supervisor manually log into a web portal and run these reports, cloud phone systems can automatically email them at specified time periods. For example, OnSIP customers can configure queue reports to be emailed every day, week, month, or quarter, and even receive real-time alerts when an issue requires immediate attention. The supervisor can then access these reports right from an email inbox, saving time and allowing him to review them even when away from a computer.

Listen to Voicemails From an Email Inbox

Retrieving voicemail messages can be a pain. You have to pick up a phone, remember PINs, and navigate menu prompts. And if you have to replay a message, make sure you press the button that replays it, not the one that deletes it!

Configure voicemail to email for an effortless way to review your messages. This feature automatically sends every voicemail to a customer-specified email address.

In addition to cutting out the hassle of dialing into a voicemail manager, voicemail to email gives mobile and remote workers the ability to review their messages wherever they are working: at home or even while commuting into work on a bus or train. And because voicemails can be retrieved from an email inbox within seconds of when they are left, staff members can call the customer or lead back while the issue is still top of mind.

Get Accurate Billing Data From Call Detail Records

Do you bill clients per phone call or per phone consultation? Cloud phone systems keep track of every call that is made and received by your account, updating records in real time. These call detail records provide comprehensive information such as date and time of the call, call length, source and destination phone numbers, and much more.

Instead of manually tracking every call that is made to and from each client, rely on a cloud phone system to store call details. Account administrators can access call data at any time by logging into the system's web portal, selecting the time period to review, and generating the report. These reports will show the call data that you need to accurately bill your clients. And if you need to further examine the data, you'll have the option to download the reports as a CSV file.
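Once a report is downloaded as a CSV file, totaling billable time per client takes only a short script. This is a minimal sketch: the column names, phone numbers, and client mapping are assumptions for illustration, so adjust them to match the columns in your provider's export.

```python
import csv
import io
from collections import defaultdict

# Hypothetical CSV export; column names are assumptions for illustration.
CDR_CSV = """date,source,destination,duration_seconds
2024-03-01,+12125550100,+13105550111,600
2024-03-01,+13105550111,+12125550100,300
2024-03-02,+12125550100,+14155550122,900
"""

# Hypothetical mapping from client phone numbers to client names.
CLIENTS = {"+13105550111": "Acme Co", "+14155550122": "Globex"}

def billable_minutes(csv_text, clients):
    """Total call minutes per client, counting calls in either direction."""
    totals = defaultdict(float)
    for row in csv.DictReader(io.StringIO(csv_text)):
        for number in (row["source"], row["destination"]):
            if number in clients:
                totals[clients[number]] += int(row["duration_seconds"]) / 60
    return dict(totals)

minutes = billable_minutes(CDR_CSV, CLIENTS)
```

With a real export, you would read the downloaded file instead of the inline string and multiply each total by your per-minute or per-consultation rate.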

Pass Along Basic Business Info to Callers With Auto Attendants

An auto attendant is a prerecorded greeting that answers each call made to a business. It states the name of the business, thanks callers for calling, and provides callers with menu prompts to reach specific people or departments. Try setting up an auto attendant to assist your receptionist.

An auto attendant helps to provide basic business information to callers. For example, one of the menu prompts can play a recording that states your business's street address, hours of operation, and driving directions so that your receptionist won't have to relay the same information over and over again. Another prompt can present callers with a dial by name directory that allows them to reach a specific employee. If callers need to speak with the receptionist, simply configure the auto attendant so that dialing "0" will call the receptionist.
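Conceptually, an auto attendant menu is a lookup from the digit pressed to an action. The sketch below uses hypothetical action names and recording file names; actual auto attendants are configured in the provider's admin portal rather than in code.

```python
# Hypothetical menu: digit pressed -> (action, target).
MENU = {
    "1": ("play", "address_hours_directions.wav"),
    "2": ("directory", "dial_by_name"),
    "0": ("transfer", "receptionist"),
}

def handle_keypress(digit, menu=MENU):
    """Return the action for a pressed digit, replaying the greeting if unrecognized."""
    return menu.get(digit, ("play", "main_greeting.wav"))

action = handle_keypress("0")
```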

By automating certain information in an auto attendant greeting, you'll free up your receptionist's time by cutting down on the calls that she has to answer.

Part 3: Desk Phone & Softphone Basics

Desk phones and softphones have some features in common—most importantly, the ability to make calls—but the former is hardware and the latter is software. To put it in other terms, desk phones are physical hardware that stay—you guessed it—on your desk. Softphones are apps that you can download to use on browsers, desktop computers, tablets, or mobile devices.

Softphones have evolved to rival the most advanced desk phone, eliminating the need for expensive hardware that ties employees to a specific location. As long as the employee has the softphone downloaded to a device of her choosing and a solid Internet connection, she has the same telecom abilities she would at her desk.

Landline Desk Phone Overview

Landline desk phones are often an essential part of modern work life, but they have quite a few disadvantages. Conventional desk phones:

- Tie you to your desk: Phone systems that keep you tied to your desk are woefully inflexible. But with a cloud phone system that works across all devices, you can take calls wherever you go.

- Force you to follow a standard welcome script: Do you have to answer every phone call by reading out a standard script? These messages are inevitably repetitive and force you to repeat the same language day after day. Auto attendants are automated welcome messages that direct callers to navigate through a phone menu to find the correct department or person.

- Offer poor sound quality: Poor call quality can seriously disrupt your conversations. Most desk phones used in the United States rely on landline phone systems that carry only a narrow band of audio frequencies and cannot deliver high-definition sound. It’s the difference between sounding like your voice is traveling over a phone line versus sounding like you’re in the same room with the other caller. Most cloud phone systems support HD voice, wideband audio that covers a frequency range more than twice the size of landline systems.

- Take up too much desk space: Sometimes desk phones just take up too much space. If you're dealing with limited real estate, replacing your desk phone with a mobile option adds more free space quickly.

- Don’t allow video conferencing: Most desk phones cannot make or receive video calls. If a client wants to use video, you may have to use a noncommercial platform of middling quality if your desk phone lacks a camera. Several softphone apps come with free video calling. They operate directly from your laptop or mobile device, harnessing their built-in cameras and microphones. This allows you to get audio and video calling from a single app and at a fraction of the cost of a desk phone.

- Make it difficult to scroll through the directory: Picking your way through complicated menu options on a desk phone is time consuming. We live in a world where smartphones allow for instant touchscreen simplicity. But most desk phones require you to manually press a button to move through a menu. A softphone allows you to click through menus, dial pads, and all other parts of the phone with ease.

VoIP Desk Phone Basics

For all of these reasons and more, VoIP desk phones are miles ahead of landline desk phones. If you’re concerned about making the switch to VoIP because of the new technology learning curve, let us put that worry to rest. The two types of phones are practically identical, and you might even be unable to tell which is which at first glance, but the inner workings of VoIP desk phones far surpass regular wired desk phones.

Because VoIP desk phones make calls through the Internet, there’s no need to call the local phone company or set up expensive copper wiring for a new desk phone: Simply plug the phone in and add the SIP address to your service provider’s admin portal. VoIP desk phones have HD voice, which provides at least twice the audio range of regular desk phones. Regular landlines have one number (or line) per phone; VoIP desk phones, on the other hand, can have several numbers associated with the same piece of hardware. And when it comes to VoIP security, landline phones tend to have more potential security risks than VoIP phones.
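The "at least twice the audio range" figure can be checked with quick arithmetic. The passbands below are commonly cited values (roughly 300 to 3,400 Hz for traditional telephony and 50 to 7,000 Hz for wideband codecs such as G.722); they are our assumption here, and exact figures vary by codec and network.

```python
# Commonly cited passbands in hertz; exact figures vary by codec and network.
NARROWBAND_HZ = (300, 3400)   # traditional landline telephony
WIDEBAND_HZ = (50, 7000)      # wideband ("HD voice") codecs such as G.722

def bandwidth(band):
    """Width of a frequency band in hertz."""
    low, high = band
    return high - low

ratio = bandwidth(WIDEBAND_HZ) / bandwidth(NARROWBAND_HZ)
print(f"Wideband covers about {ratio:.1f}x the narrowband range")
```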

VoIP Softphone Overview

Softphone apps bring all of the functionality of a telephone onto a computer or mobile device. With a business softphone, users can log into their account on any device and be instantly connected to their business phone line. This flexibility allows someone to work from anywhere with a good Internet connection. As such, softphones are excellent for remote or mobile workers.

Keep in mind that a software phone needs to have high-quality microphone and speaker capabilities to support the full HD voice that VoIP desk phones enjoy. With desk phones, the hardware is built to maximize audio quality. Additionally, a great Internet connection is needed for softphones to get high-quality HD audio. Otherwise, call quality could be disrupted.

Softphones are particularly advantageous for small business employees constantly on the go or working from client sites. Video call capabilities combined with modern mobile devices mean employees can video conference in coworkers to see exactly what they’re working on, even from the middle of a worksite. And instead of dialing in to check voicemail, they can check messages just like email any time they look at their smartphone.

Softphones are invaluable communication apps because they allow mobile and remote workers to use the business's cloud VoIP service while away from the office.

For remote workers, softphones provide the same functionality without having to set up expensive desk phone systems at home or worrying about staying in the loop if working from a coworking space. And advanced call forwarding, set up in the admin portal, means mobile employees can be reached on whichever device they have, wherever they are.

The best-known business calling features—call transfer, call hold, busy lamp field, mute, and three-way conferencing—are replicated in VoIP softphones. So with regard to standard calling features, desk phones and softphones are comparable, although softphones do offer several advantages over desk phones. But to replace a desk phone, a softphone app must do more than simply make calls to and from the Public Switched Telephone Network (PSTN).

To help you evaluate softphone options for your business calling needs, we’ve created a list of essential softphone features.

1. Contact Directory

One of the most important business softphone features is the ability to see a list of all the contacts in your organization. Having a complete directory by name makes it easy to find and reach coworkers, especially if you have several office locations or departments.

Some softphones also allow you to create your own custom contact list from the complete directory. This feature makes it convenient to contact the people you work most closely with, rather than having to search or sort through a long list of names.

When testing different softphones, find out how easy it is to upload a list of contacts and add a new contact. Ideally, the directory should sync automatically to your business VoIP account and eliminate the need to manually update the directory each time someone joins or leaves the company.

2. Extension Dialing

In addition to being able to call coworkers via a company directory, a business softphone should be able to connect you to coworkers via extension dialing. If you’re used to dialing extensions on your office desk phone, this feature will be very handy. Instead of having to search for a person’s name on a list, you can initiate a call with a few simple keystrokes. This feature is especially useful when joining conference bridges, where team members are usually given a four-digit extension to dial.

3. Call Transfer

You’ll also need the ability to transfer calls easily from one user to another. A business-grade softphone should be able to transfer a call to another extension, a ring group, or an outside line.

When trying out different softphones, note how many steps it takes to initiate a transfer. Can you simply drag and drop a call to transfer it to someone else? How many seconds does it take to complete a transfer? Selecting a softphone with a smooth user experience will make your employees’ lives much easier.

4. Call Hold

Putting a caller on hold is a simple yet critical feature for businesses that receive simultaneous incoming calls. Your softphone app should be able to manage multiple calls easily and display how long someone has been on hold so that you can keep track of which calls need to be addressed first.

5. Caller ID

Caller ID is one of the most relied upon features of business phones. There’s a huge difference between answering a call from a friend, a manager, and an unknown number. Being able to identify your caller can determine how you deal with the call and is an invaluable feature for sales and support staff.

While most softphones will display some form of caller ID, it may not include all the information that you need. For example, does the softphone app include both the name and phone number of the caller? Are you able to tell if the number is private, unknown, or international?

6. Three-Way Conferencing

Occasionally, the need will arise for a third person to join in on a call. While most desk phones have the ability to engage in three-way conferencing (a.k.a. a three-way call), not all softphones can do the same. When testing out potential softphone apps, ask if three-way conferencing is available.

Note that this is different from a conference bridge or conference suite. Three-way conferencing lets a user add a third party to a call that is already in progress, whereas a conference bridge is a separate extension that all callers dial in to join the call.

7. Presence

For teams who work in different offices or remotely, it’s important to know whether a colleague is online or not. Being able to see someone’s real-time status on a softphone is a helpful indication of their availability. At the very least, a softphone should distinguish between someone who is online, offline, or on a call.

With presence, a sales or customer service manager can quickly glance at the list of contacts to see which agents are currently on the line. A receptionist can check whether an executive is currently available or busy, and a coworker can verify that a conference bridge is open before inviting others to a team meeting.

8. Volume Control & Mute

When you’re calling in from a remote location, you might not always be in a quiet place. That’s why it’s essential for softphones to have volume controls and a mute function, which allows you to stop sending your audio feed to other callers on the line.

9. DTMF (Dialpad)

Many softphones feature a dialpad as the main interface for initiating calls, but others do away with it in favor of a more "modern" look. However, the dialpad is an important feature when dealing with phone menus or entering conference PINs.

10. Video Calling

Arguably one of the most valuable features of a softphone app is video calling. This collaboration feature allows employees working remotely or in different offices to see each other face to face using their computer’s webcam. A softphone app should distinguish between voice and video calls in the user interface and allow users to enable or disable their video feed at any time during the call.

Part 4: WebRTC Essentials

WebRTC is a set of JavaScript APIs that allows organizations and developers to add real-time communications (RTC) features to their browser applications without having to deal with the inherent complexities of requiring downloads or plugins to use them.

Many of the devices we use on a daily basis—mobile phones, tablets, some desk phones, and personal computers—are connected to the Internet. With WebRTC, all of these devices can exchange voice, video, and real-time data seamlessly between one another on a common platform.

The three major components of WebRTC are:

- MediaStream: Otherwise known as getUserMedia(), MediaStream allows the browser access to local media devices such as the camera and microphone. It also allows the browser to capture media. Read more about MediaStream.

- RTCPeerConnection: Allows browsers to connect directly with other browsers (peers). Read more about RTCPeerConnection.

- RTCDataChannel: Allows browsers to exchange data peer to peer. Read more about RTCDataChannel.

A Brief History of WebRTC

About a year after the launch of Google Chrome in 2008, the idea for WebRTC came about when the Chrome team began to look for functionality discrepancies between the web and the native desktop. They soon realized that there was no satisfactory solution for real-time communications.

At the time, RTC in the Web browser meant either using Flash or plugins. Applications that ran on Flash offered experiences that were low quality and required server licenses to run. Plugins were not only a hassle for end users to install but also for the developers creating them. Maintaining the life cycle of plugins became a resource strain as organizations needed to deploy, monitor, and update different versions for different browsers across several operating systems.

In June 2011, Google released WebRTC as an open-source project after acquiring both On2, the creators of the VP8 video codec, and Global IP Solutions, a company that was already licensing the low-level components needed for WebRTC, in 2010. Since then, many other companies have made contributions to the WebRTC project, including Mozilla, Ericsson, and AT&T.

Mass implementation of WebRTC stalled over confusion around standards and some major browsers holding out on supporting it. Microsoft waited until they released their new browser, Edge, and Apple came onboard after Safari 11’s release in 2017. With standardized support across the major players in web browsing, WebRTC can finally enter a new phase of growth. Combined with recent AI developments and the consumer market on the verge of adopting 5G, WebRTC growth is poised to accelerate at an unprecedented rate.

Today, there are already well over 1 billion potential WebRTC endpoints, and that number is rapidly growing.


WebRTC Development

WebRTC development, like any other form of software development, is shaped by the technical possibilities of a given technology. In the case of WebRTC, the constraints are relatively simple. The code is in JavaScript, the functionality is powered by three APIs built into major browsers such as Chrome, Firefox, and Safari, and the mechanism that connects WebRTC peers (i.e., browsers) is generally powered by a prebuilt signaling platform such as OnSIP's. Coding is of course handled by the developer. But WebRTC’s built-in browser functionality and support and OnSIP’s preexisting signaling network take care of the other two components of WebRTC development.

JavaScript and HTML5

The construction of WebRTC applications is powered by JavaScript. This makes the process of coding fairly straightforward for most developers who are acquainted with standard web languages.

The APIs involved in WebRTC development (getUserMedia(), RTCDataChannel, and RTCPeerConnection) are built into major browsers, including Chrome and Firefox. The commands that these APIs use are generally self-explanatory (e.g., stream.getVideoTracks(), mediaStreamSource.connect()) and are covered by reputable documentation across the Internet. In this sense, the learning curve for the average web developer is slight. WebRTC was never designed to be an experts-only technology. It’s meant to be used widely by developers of all stripes to solve real-time communications problems in their apps.

In terms of required software, WebRTC development demands nothing more than tools that can write and run HTML5. Vim, Emacs, or any standard text editor can be used to write HTML, JavaScript, and CSS. Then Chrome or Firefox can open the code to run the program, while debuggers such as Chrome’s DevTools can help track down errors. In short, WebRTC development requires nothing more than the free, standard programs that most web developers use on a daily basis.

Built-In Browser Functionality & Support

WebRTC is an open-source project spearheaded by the Google Chrome Team. WebRTC’s three APIs are currently built into a variety of browsers, including Chrome, Firefox, and Safari. There are no fees or licensing requirements for any component of WebRTC development. This means that every Chrome, Firefox, and Safari browser is equipped to stream real-time media without requiring developers to build complex APIs, license or construct codecs, or negotiate communication between different browsers.

Security

Like any technology that handles private information, WebRTC is obligated to maintain security measures that protect users from malicious attacks. WebRTC encrypts media and data using established standards (DTLS and SRTP), and that encryption is mandatory rather than optional. Because opening up routes of communication paves the way for security breaches, WebRTC also allows developers to build end-to-end encryption, thereby scaling security measures alongside advances in streaming communication features.

Signaling Platform: Putting WebRTC Development All Together

You’ve built your WebRTC-based application. You have a supported browser to run the app. Now you just need a mechanism that can get peers (i.e., browsers) to communicate with each other and share media. This is where a signaling platform, such as OnSIP's, enters WebRTC development.

WebRTC Signaling

Session Initiation Protocol (SIP) is an open, standards-based signaling method that negotiates multimedia sessions (i.e., voice and video calls) over the Internet. SIP creates the messages that initiate and terminate multimedia calls between two endpoints. With its specialized structure, SIP is uniquely situated to broker WebRTC connections between two browsers. SIP and WebRTC are the proper combination for developers who want to build scalable, reliable, and durable communications applications.

WebRTC signaling allows users to exchange metadata to coordinate communication, while SIP creates the messages that initiate and terminate multimedia calls between two endpoints.
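To show what a SIP message that initiates a call looks like, here is a minimal sketch that assembles a bare-bones INVITE request as text. The `build_invite` helper, its parameters, and the example addresses are hypothetical, and the message carries no session description body. A real client would use a SIP library and include an SDP offer describing the media.

```python
import uuid

def build_invite(caller, callee, host, cseq=1, transport="WSS"):
    """Assemble a bare-bones SIP INVITE request as text (no SDP body)."""
    call_id = f"{uuid.uuid4()}@{host}"
    # Branch IDs must start with the "z9hG4bK" magic cookie defined in RFC 3261.
    branch = "z9hG4bK" + uuid.uuid4().hex[:16]
    tag = uuid.uuid4().hex[:8]
    lines = [
        f"INVITE sip:{callee} SIP/2.0",                       # request line
        f"Via: SIP/2.0/{transport} {host};branch={branch}",   # where to send responses
        "Max-Forwards: 70",
        f"From: <sip:{caller}>;tag={tag}",
        f"To: <sip:{callee}>",
        f"Call-ID: {call_id}",                                # identifies this call
        f"CSeq: {cseq} INVITE",
        f"Contact: <sip:{caller}>",
        "Content-Length: 0",
    ]
    # SIP uses CRLF line endings and a blank line to end the headers.
    return "\r\n".join(lines) + "\r\n\r\n"

message = build_invite("alice@example.com", "bob@example.com", "client.example.com")
```

An INVITE like this starts the session; a later BYE request carrying the same Call-ID ends it.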

The SIP WebRTC Combination

SIP offers several advantages over other signaling methods. As an open standard, SIP is consistently compatible with desk phones, cell phones, computers, tablets, and a host of other devices. Unlike other standards, SIP’s interoperability with the Public Switched Telephone Network (PSTN) has been established for years. This means that developers can use SIP and WebRTC to allow users to call a business’s desk phones directly from an Internet browser, all without requiring any plugins or downloads. This is immensely helpful to businesses that want to have direct and immediate access to their customers.

The SIP community has had a thriving user base for decades. Businesses of all stripes have relied on SIP to build everything from massively scalable VoIP platforms to chat applications with simple video capabilities. With its open architecture, the SIP protocol is steadfastly maintained by the vast group of engineers who rely on its continued prosperity. Additionally, SIP doesn’t require any licensing fees to use. WebRTC and SIP are both nonproprietary protocols that can be used freely by anyone in the world.

OnSIP for Developers: SIP and WebRTC in One Platform

The SIP protocol powers OnSIP’s massive, geographically distributed business VoIP platform that services more than 100,000 small and medium-sized businesses. OnSIP's WebRTC signaling platform was built atop this sophisticated architecture to harness undersubscribed proxies that are already primed to scale applications, bridge compatibility gaps between endpoints, broker connections behind firewalls, and track and report communications. These are the types of crucial features that platforms based on SIP and WebRTC can enable.

SIP and WebRTC are a powerful combination that can be fully realized with platforms such as OnSIP's. We've used the same platform to build all of our WebRTC-based products. By using our SIP-based VoIP network, we were able to completely integrate WebRTC into our platform.

WebRTC Audio & Video Calling With SIP

WebRTC-to-SIP calling is within reach of any developer who uses the WebRTC APIs. When implemented on a mature SIP platform like OnSIP's, WebRTC applications can essentially operate as phones within the browser.

Audio Calls

Not too long ago, the idea that somebody might be able to call the Public Switched Telephone Network (PSTN) from an Internet browser without downloading any plugins seemed like a pipe dream. But with the advent of WebRTC, this scenario has become a reality.

Users can now call standard desk phones directly from their browser, without requiring any plugins or downloads. WebRTC to PSTN calling bridges the gap between older and newer forms of telecommunication, and the best part is that users can do it with the click of a button.

Video Calls

WebRTC video currently powers everything from basic video chats to business-grade communications applications. With its ease of integration, WebRTC video has increasingly become a standard component of any application with embedded real-time video. From our own experience, WebRTC has proved to be a versatile real-time video technology that has helped us engineer everything from basic video chats to single-click, in-browser video calls that have been fully integrated into our mature business VoIP platform.

What about video conference calls? In the past, capturing and transmitting video for conferences required each user to download additional software or plugins. But WebRTC video conferencing lets users communicate via instant streaming feeds that rival current video conferencing options in terms of quality and reliability.

Part 5: Cybersecurity

Cybersecurity is a fairly broad umbrella. It encompasses everything from individual password habits to securing a website to an entire industry dedicated to protecting data stored in cyberspace. For us at OnSIP, it means building communications tools that are compliant with existing cybersecurity laws. It means annual cybersecurity training for every employee—from the writers to the engineers with MIT degrees. It means taking care that only people who need access to sensitive information have access. And it means educating our users on best practices so that they can maintain security as well.

We use locks and keys in every aspect of life. When we go out, we lock our doors. Our bank accounts require secondary confirmation like PINs. Our cars may start automatically, but we need to have the key on us. Cybersecurity is the same idea, just for our digital files. We have security measures in place elsewhere because someone might want to break in and steal something—not because our apartments or bank accounts are necessarily a gold mine but because they’re there. Digital data is the same but can cause significant damage in the long run if it falls into the wrong hands. Personal information, financial information, even healthcare information: All of this is valuable to hackers and legitimate data collectors, so it’s imperative that we protect it.

How Cybersecurity Works

At the risk of sounding clichéd, cybersecurity is a team effort. The weakest link is often the one exploited. This is why it’s imperative to educate your entire team, and regularly. One weak password, unsecured WiFi connection, or convincing phishing email is all hackers need to break into your phone system or computer system. Data breaches have financial consequences that can be hard to come back from, and the reputation fallout can be even worse.

Additionally, cyber threats constantly evolve, so cybersecurity measures must adapt accordingly. Think how password norms have changed over the past decade or two. It used to be we could use our pets' names. Then we added numbers and special characters. Now, randomly generated passwords or passphrases are your strongest option.
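As a rough illustration of what "randomly generated" means in practice, the sketch below uses Python's standard `secrets` module to build both a random password and a passphrase. The function names and the tiny `WORDS` list are assumptions made up for this example; a real passphrase generator would draw from a list of several thousand words, and a password manager will do all of this for you.

```python
import secrets
import string

# Hypothetical mini word list for illustration only; real passphrase
# tools draw from lists containing thousands of words.
WORDS = ["copper", "lantern", "orbit", "maple", "velvet", "harbor", "quartz", "meadow"]


def random_password(length: int = 16) -> str:
    """Return `length` characters drawn from letters, digits, and punctuation."""
    alphabet = string.ascii_letters + string.digits + string.punctuation
    # secrets.choice uses a cryptographically strong random source.
    return "".join(secrets.choice(alphabet) for _ in range(length))


def random_passphrase(word_count: int = 4, separator: str = "-") -> str:
    """Return `word_count` randomly chosen words joined by `separator`."""
    return separator.join(secrets.choice(WORDS) for _ in range(word_count))
```

Calling `random_password(20)` returns a different 20-character string each time, and `random_passphrase(5)` returns five hyphen-separated words.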

Types of Cybersecurity

There are a few different ways to ensure that your information stays safe in cyberspace.

Education: The first and most important type of cybersecurity is education. You can’t protect yourself or your business from cyber threats if you don’t understand them. This is why regular cybersecurity training is imperative. Not only does it reinforce the basics, like how to recognize scam emails or why you shouldn’t reuse passwords, but it adds new threats to your knowledge base on a regular basis.

Passwords: Password security is paramount. Everyone has so many passwords that it’s inevitable someone will reuse or share login credentials. Password managers are a great tool to ensure strong passwords across the board!

VPNs: VPN encryption protects your data while you browse and send info across the Web. If you ever use free WiFi, trust us, you want one.

Firewalls: Firewalls are excellent security tools, and luckily, most devices have them built in. They’re by no means a be-all and end-all security solution, though. It’s important to know what a firewall can do as well as what it can’t. Firewalls are not the same as anti-virus software, but they are the gatekeeper for traffic to and from your network.

Prevention: Prevention is key. Attempted data breaches are a fact, not a vague threat. Whether or not they’re successful is up to you. Containment is necessary after a breach, and many professionals prefer to focus on that because results are trackable. However, even the most basic prevention efforts can save a company quite a bit financially, let alone peace of mind.

VoIP Network Security: Never assume your cloud service has security perfectly handled. Cloud security architecture should create a strong base, particularly if you choose the right cloud provider. But both cloud tech and cyberthreats constantly evolve, so it’s important to remember that you and everyone in your system are also part of that security architecture—known as a shared security model.

Unfortunately, there’s no standard out there for shared security, so it comes down to individual service-level agreements between the customer and provider. So how do you go about choosing a VoIP provider in light of this knowledge, and what steps should you take in the workplace(s) once you’re set up? Here’s how to choose a secure VoIP provider.

VoIP Phone Security: VoIP phones, like other devices on the Internet, can be targeted by hackers for the purposes of fraud, theft, and other crimes. For a small business, losing thousands of dollars to VoIP fraud can severely damage the bottom line. Thankfully, you can greatly reduce the possibility of these incidents by taking a few simple precautions. By securing key settings on your phones, router, and business phone system, you can prevent intrusions that cost you money, time, and manpower. Here at OnSIP, we’re alerted to unexpected spikes in usage and quickly suspend an account if it has been hacked.

VoIP fraud can severely damage your bottom line, but simple security measures can help prevent intrusions that cost you time and money.

When it comes to VoIP security and cybersecurity as a whole, using strong passwords is particularly crucial. Many VoIP devices and interfaces come with pre-set passwords that are publicly available online, so change them before putting a device into service. Most phone web interfaces have no automatic lockouts, so attackers can bombard your interface with an unlimited number of automated password guesses. However, a little vigilance can keep your passwords protected.
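To show why the missing lockout matters, here is a minimal sketch of the kind of automatic lockout that most phone web interfaces lack. The `LoginGuard` class, its method names, and the five-attempt threshold are all assumptions invented for this illustration; it does not describe how any particular phone or provider behaves.

```python
from dataclasses import dataclass, field


@dataclass
class LoginGuard:
    """Locks a user out after too many consecutive failed logins (illustrative only)."""

    max_failures: int = 5  # assumed threshold, not a standard value
    failures: dict = field(default_factory=dict)

    def is_locked(self, user: str) -> bool:
        return self.failures.get(user, 0) >= self.max_failures

    def record_attempt(self, user: str, success: bool) -> bool:
        """Record one login attempt and return True if it is accepted."""
        if self.is_locked(user):
            # Once locked, even a correct password is rejected.
            return False
        if success:
            self.failures[user] = 0
            return True
        self.failures[user] = self.failures.get(user, 0) + 1
        return False
```

With a guard like this in place, an attacker gets only a handful of guesses per account instead of an unlimited number. A production version would also need a way to unlock accounts, such as a timeout or an administrator reset.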

Part 6: VoIP Glossary

Unless you’re already a VoIP pro, the sheer number of strange terms can pose a seemingly steep learning curve. We promise it’s not that daunting! We’ve broken down the main words and phrases—and a number of the not-so-main ones—that you’ll come across as you dive into the VoIP world to help you pick up the finer details of cloud phone features and functionality in no time.

Automatic Call Distribution (ACD) Queues

ACD queues are an advanced version of ring groups that allow employees to take one call at a time while new callers wait for an available person. Employees log in to the queue to receive calls and log out when they are unavailable or done for the day. This is a great tool for keeping callers on the line instead of constantly getting a busy signal or hanging up because no one answered. Explore more.

Announcements

Announcements play a message to the caller and then immediately forward them on to another address. Announcements may be used for such purposes as playing the hours of operation of your business and then forwarding the caller back to the main attendant menu. Another use may be to play a status message to callers before forwarding them on to your support group, potentially relieving your support representatives of answering repetitive questions about a known issue currently affecting your business.

API (Application Programming Interface)

APIs are sets of specifications, communication protocols, and tools that help developers build programs. APIs are what allow various apps to communicate and share data with each other, even if written in different languages—it’s the bit of software that sends and receives requests. Explore more.

Auto Attendants & Attendant Menus

A standard feature across the board with VoIP, an auto attendant is simply the automated menu you hear when calling a company: "Press 1 for Sales; Press 2 for Support." It more efficiently funnels calls for employees and gives callers the exact direction they need, cutting down on frustration. Also known as a "virtual receptionist." Explore more.

Business Hour Rules (BHRs)

BHRs allow you to configure your phone system to automatically direct calls based on whether you’re open, so customers know whether their call will be answered. If you’re open 9 to 5 on weekdays, calls during those hours will go to your usual attendant menu. If someone calls during off hours, you can set up an announcement listing your hours or alternate methods of communication. You can even set business hour rules to unify offices in different time zones. Explore more.
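The 9-to-5 weekday rule described above can be sketched as a simple time check. This is only an illustration of the logic: the destination names `attendant_menu` and `after_hours_announcement` are hypothetical, the timestamp is assumed to already be in the office's local time, and real phone systems configure these rules through an admin interface rather than code.

```python
from datetime import datetime, time

OPEN_DAYS = {0, 1, 2, 3, 4}  # Monday=0 through Friday=4
OPEN_TIME = time(9, 0)       # 9:00 a.m.
CLOSE_TIME = time(17, 0)     # 5:00 p.m.


def route_call(now: datetime) -> str:
    """Return the (hypothetical) destination for a call arriving at `now`."""
    is_open = now.weekday() in OPEN_DAYS and OPEN_TIME <= now.time() < CLOSE_TIME
    return "attendant_menu" if is_open else "after_hours_announcement"
```

A call at 10:00 a.m. on a Monday would go to the attendant menu, while a call on Saturday or at 5:00 p.m. sharp would hear the after-hours announcement.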


Business VoIP vs. Consumer VoIP

Even if you don’t know it, you’re probably familiar with consumer VoIP. While VoIP as a proper phone system is primarily a business tool, platforms like FaceTime and Google Voice that use the Internet for free voice and video calls are designed for personal use, so they fall into the consumer VoIP category. So do personal calls made through IP hardphones. Business VoIP is much more complex and comprises numerous extra features that companies rely on to communicate efficiently. Explore more.

Busy Lamp Field (BLF)

BLF is a PBX feature that indicates which other lines in your network are busy or available and can even show when a teammate’s phone is ringing. It helps you keep track of which coworkers are free in real time.

Call Detail Records (CDRs)

CDRs log the metadata on how a number or user is utilizing the phone system. They track call length, type of call, date and time, parties involved, etc. CDRs are handy for billing and call reporting, as well as for identifying calling trends. Explore more.
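To make the idea concrete, here is a minimal sketch of what a CDR might look like as a data structure, along with a small reporting helper. The field names and the `summarize` function are assumptions chosen for illustration; actual CDR formats vary by provider.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class CallDetailRecord:
    """One call's metadata (hypothetical field names)."""

    started_at: datetime   # date and time the call began
    duration_seconds: int  # call length
    call_type: str         # e.g. "inbound" or "outbound"
    caller: str            # party that placed the call
    callee: str            # party that received the call


def summarize(records):
    """Return total talk time in seconds and the number of calls per type."""
    total_seconds = sum(r.duration_seconds for r in records)
    calls_by_type = Counter(r.call_type for r in records)
    return total_seconds, calls_by_type
```

Totals like these are the raw material for billing and call reports, and grouping by date or by caller instead of by type would surface calling trends.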

Call Parking

Call parking is like putting someone on hold but with more options and not beholden to a specific phone line. The call gets "parked" (read: put on hold) in the cloud, and anyone from your organization can take it, rather than risk the possibility of transferring to someone away from their desk. Explore more.

Call Transfer

A call transfer occurs when one person relocates a call to another line. There are two types of call transfers: cold and warm. Cold transfers, also known as blind transfers, occur when a call is transferred to another person with no introduction. Warm transfers, also known as attended transfers, occur when there’s a bit of conversation with pertinent information to introduce the call before it is actually transferred. Explore more.

Caller ID

Caller ID is a widely used feature that allows you to see the name and number of an incoming caller before you pick up. Because it’s not regulated by the FCC, caller ID info is regularly outdated or camouflaged, so it should not be taken as absolute confirmation of the caller’s identity. Explore more.

Cloud Phone System

A cloud phone system is a VoIP-based business phone system hosted by a third-party provider like OnSIP. It’s typically more secure and advanced than regular phone lines. Explore more.

The term “cloud phone system” is often used interchangeably with “business VoIP.”

Cloud phone systems offer advanced security and telephony features, such as extension dialing, auto attendants, and conference bridges.

Communications Platform as a Service (CPaaS)

CPaaS is similar to software as a service (SaaS) but is specific to communications. Based in the cloud, it allows for real-time communication and integrated communications tools without requiring any heavy lifting by developers. Explore more.

Conference Bridge

A conference bridge creates a virtual meeting room for your business, allowing staff members and external parties to call in from wherever they are working. Equipment familiarly known as a “conference bridge” can answer many separate phone calls at the same time and link—or “bridge”—them together. That way, the group of callers can speak and listen to each other as if they were all in the same room. Explore more.

Contact Center as a Service (CCaaS)

Contact center as a service is simply the cloud platform version of your contact center. Much like hosted VoIP and other -aaS options, it removes the upfront setup costs in both time and money. Plus, with everything centralized in the cloud, you know your contact center is doing exactly what it’s supposed to do: manage and streamline customer interactions to improve customer experience. Explore more.

Dial by Name Directory

A dial by name directory is a call feature that enables callers to use the DTMF keypads on their phones to find a specific staff member. Once found, the directory will automatically connect the caller to the employee. Explore more.

Direct Inward Dialing (DID)

DID is a telephone service developed for businesses that have their own PBX system. Originally built for POTS (plain old telephone service), it is a way for a service provider to allocate a range of numbers to a business without needing a separate physical telephone line for each user. In VoIP, a DID number is assigned to a gateway that allows PSTN calls to directly reach the VoIP user with the corresponding DID number, allowing callers to bypass the attendant menu. Explore more.

Disaster Recovery as a Service (DRaaS)

Disaster Recovery as a Service (DRaaS) is a service offered by third-party providers to help prevent and mitigate the effects of business disruptions. Explore more.

DTMF Tones

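DTMF (dual-tone multi-frequency) signaling is what produces the tones you hear when you press the keys on a phone keypad. It works by assigning eight different audio frequencies to the rows and columns of the keypad. The columns on the keypad are assigned high-frequency signals, while the rows are assigned low-frequency signals.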

When you press a key—which corresponds to a number or symbol—the phone generates a tone that simultaneously combines the high-frequency signal from the column that key is in with the low-frequency signal of the row it’s in. This unique signal pair is then transmitted over telephone wires to the local phone exchange, where the two signals are decoded to determine which numbers you are dialing. Explore more.
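The row-and-column scheme can be sketched as a small lookup. The frequencies below are the standard DTMF values; the fourth column (A through D) accounts for the eighth frequency and is absent from most consumer keypads. The function name `dtmf_pair` and the table layout are choices made for this example.

```python
ROW_FREQS_HZ = (697, 770, 852, 941)      # low-frequency group, one per row
COL_FREQS_HZ = (1209, 1336, 1477, 1633)  # high-frequency group, one per column
KEYPAD = ("123A", "456B", "789C", "*0#D")  # keys laid out row by row


def dtmf_pair(key: str) -> tuple[int, int]:
    """Return the (row, column) frequency pair in Hz for a single keypad key."""
    if len(key) != 1:
        raise ValueError("key must be a single character")
    for row, keys in enumerate(KEYPAD):
        col = keys.find(key)
        if col != -1:
            return ROW_FREQS_HZ[row], COL_FREQS_HZ[col]
    raise ValueError(f"not a DTMF key: {key!r}")
```

Pressing 5, for instance, sits in the second row and second column, so `dtmf_pair("5")` returns `(770, 1336)`. Because every key has a unique pair, the exchange can identify the key from the two tones alone.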

Enhanced 911 (E911)

Enhanced 911 simply refers to the extra information, like your location and callback number, that 911 dispatchers receive when you make an emergency call. Because VoIP phones can be used anywhere with an Internet connection, it’s not always clear where a caller is located. This is why service providers require you to enter your location (office, home office, coworking space, etc.) for each phone that needs it so that in case of an emergency, first responders know where to go. Explore more.


5G

5G is the latest mobile generation. It was introduced in 2018, with carriers deploying coverage across America throughout 2020. New mobile generations are introduced roughly once a decade; they come with new capabilities and standards to differentiate them from the previous generation. The previous generation, 4G, brought the broadband speeds that made streaming and app-based services practical on mobile devices.

5G offers dramatically faster speeds that allow Internet connectivity to expand from typical mobile devices to all things in the IoT (Internet of Things). It’s unclear exactly how this new generation will impact innovation, but early rumblings include talk of increased automation and machine learning (ML), remote surgery, and smart cities. Explore more.

5 Nines

The term five 9s refers to system and network availability. It’s a metric that measures uptime, that is, how often your system is up and running. Availability is typically expressed as a percentage of the year, with service levels usually ranging from 99% (two nines) to 99.999% (five nines). At five nines, a system can be down for only a little over five minutes per year. Explore more.
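To see what these percentages mean in practice, the short sketch below converts an availability percentage into the maximum downtime it allows per year. The function name is made up for this example, and the calculation assumes a 365-day year.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, ignoring leap years


def max_downtime_minutes(availability_percent: float) -> float:
    """Return the yearly downtime, in minutes, allowed at a given availability."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)
```

At 99% availability the allowance works out to roughly 5,256 minutes (about 87.6 hours) per year, while at 99.999% it shrinks to roughly 5.26 minutes per year.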

HD Voice

HD voice describes a high-definition audio call. You may hear a lot of jargon behind the technical specs of voice quality, like "codec" and "frequency" and "wideband," but suffice it to say that any device that supports HD voice (which is most non-landline devices) is compatible with OnSIP. Explore more.

Hold Music